“The beginning

For more than 15 years I’ve built my own “Home Automation” components. Nothing fancy, though maybe back then it was. It started with some colored LED spots under my couch, connected to my Ethernet via a microcontroller. Later I added WiFi and 433MHz radio to control various remotely switchable outlets. Some of them were even built into a light switch to control it. I even had Linux running in a light switch for some time. Then came my MagicMirror and with it the need for some presence detection based on WiFi. And so on, just to give you an idea of where this is coming from.

At one point I wanted to control all of this not only via smartphone, but also with my voice! Five years ago my setup for that consisted of an ARM-based SBC and a USB microphone. I used Jasper, and of course I used “Jarvis” as a wake-word well before Mark Zuckerberg had the same idea :). But… it was horrible: you had to scream across the room to have a 25% chance of being understood. Clearly the microphone was to blame, but I hadn’t heard of microphone arrays yet. That was until I had a closer look at the Amazon Echo’s technology. However, my “Home Automation” was all local and in-house, and my voice should stay in-house as well.

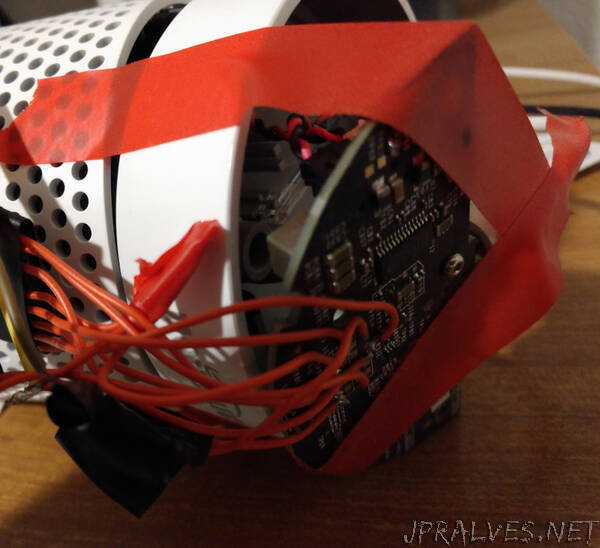

The Echo

Luckily I spotted a great blog post from F-Secure, “Alexa, are you listening”, which was in turn based on the Cook/Clinton paper. They used the debug interface of the device to gain access to it, and streamed the microphone audio signal to another device. So hey, that’s what I wanted: the Echo had a microphone array, could recognize speech much better because of it, and was “jailbreakable”! Around that time I also learned about other microphone array projects like the ReSpeaker, but my focus stayed on the Echo. So about three years ago I bought a second-hand Echo and asked a friend to 3D-print me a docking station for it.”