“This project addresses a practical problem: analyzing air quality in both indoor and outdoor environments.

The primary purpose of a systematic air quality monitoring network is to distinguish areas where pollutant levels violate an ambient air quality standard from areas where they do not. Because health-based ambient air quality standards are set at the pollutant concentrations above which adverse effects on human health are expected, evidence that levels in an area exceed a standard requires the public air quality agency to take measures to reduce the corresponding pollutant. In other words, strategies, technologies, and regulations must be developed to achieve the necessary reduction in pollution.

The secondary purpose of a systematic monitoring network is to document the success of these mitigation efforts, either by recording the rate of progress toward attaining the ambient air quality standard or by showing that the standard has been achieved.

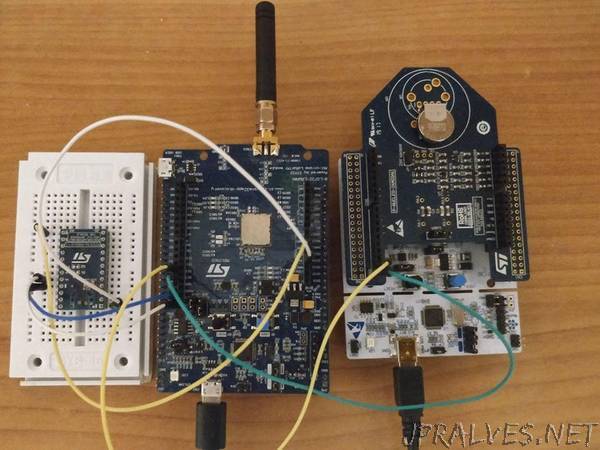

We propose a simple, low-cost solution: a compact device with good precision that warns when the pollution level exceeds a given threshold. It sends data to the cloud in real time, where they can be analyzed and monitored even by inexperienced staff, thanks to the ease of use of the proposed software.

2. Workflow

Step 1: Read humidity, temperature, and CO values from the sensors on the board.

Step 2: If a value exceeds its threshold, a timer starts and all readings collected during that interval are processed. If the average of these readings still exceeds the threshold at the end of the interval, the data are sent to the neural network; otherwise, the detection is reset.
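The confirmation logic of Step 2 can be sketched as follows. This is a minimal sketch, not the project's firmware: it assumes the timer is approximated by a fixed number of samples (`window_size`), that a single channel (here CO) triggers the window, and that the per-channel averages over the window are what gets forwarded. The class name `ThresholdWindow` and the threshold value used below are hypothetical.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class ThresholdWindow:
    """Hypothetical helper: confirms a threshold crossing by averaging over a window."""
    key: str            # channel that triggers the window, e.g. "co"
    threshold: float    # placeholder threshold; real values are not given in the source
    window_size: int    # number of samples standing in for the timer duration
    buffer: list = field(default_factory=list)
    active: bool = False

    def update(self, reading: dict):
        """Feed one reading; return the window averages if confirmed, else None."""
        if not self.active:
            if reading[self.key] > self.threshold:
                self.active = True          # threshold crossed: start the "timer"
                self.buffer = [reading]
            return None
        self.buffer.append(reading)
        if len(self.buffer) < self.window_size:
            return None                     # window still open
        # Window closed: average every channel over the buffered readings.
        averages = {k: mean(r[k] for r in self.buffer) for k in reading}
        self.active = False
        self.buffer = []
        if averages[self.key] > self.threshold:
            return averages                 # sustained exceedance: forward to the model
        return None                         # transient spike: reset the detection


window = ThresholdWindow(key="co", threshold=10.0, window_size=3)
for r in [{"co": 12.0, "temperature": 20.0, "humidity": 50.0},
          {"co": 11.0, "temperature": 21.0, "humidity": 52.0},
          {"co": 13.0, "temperature": 22.0, "humidity": 54.0}]:
    confirmed = window.update(r)
print(confirmed)  # {'co': 12.0, 'temperature': 21.0, 'humidity': 52.0}
```

Averaging over the window filters out short spikes, so only sustained exceedances reach the neural network and the radio link.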

Step 3: Before the values are sent to the cloud, a neural network uses them to predict the benzene concentration in the air, without requiring an additional sensor.
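In Step 3 the network acts as a virtual (soft) sensor: benzene is estimated from quantities the board already measures. The source does not describe the network's architecture or training, so the sketch below assumes a small fully connected network with three inputs (CO, temperature, humidity), one hidden ReLU layer of four units, and a single output. All weights and normalization statistics are placeholders, not trained values; on the device they would come from a model trained offline.

```python
# Placeholder parameters for a hypothetical 3-4-1 network (NOT trained values).
W1 = [[0.5, -0.2, 0.1],
      [0.3, 0.8, -0.5],
      [-0.6, 0.1, 0.4],
      [0.2, 0.2, 0.2]]
B1 = [0.0, 0.1, -0.1, 0.05]
W2 = [0.7, -0.3, 0.5, 0.4]
B2 = 0.1

# Hypothetical standardization statistics for (CO, temperature, humidity).
X_MEAN = (2.0, 18.0, 50.0)
X_STD = (1.5, 9.0, 17.0)


def relu(x: float) -> float:
    return max(0.0, x)


def predict_benzene(co: float, temperature: float, humidity: float) -> float:
    """Forward pass of the placeholder network; returns an estimated benzene level."""
    # Standardize each input with the (assumed) training statistics.
    x = [(v - m) / s for v, m, s in zip((co, temperature, humidity), X_MEAN, X_STD)]
    # Hidden layer: weighted sum plus bias, then ReLU.
    hidden = [relu(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, B1)]
    # Output layer; clamp at zero because a concentration cannot be negative.
    return relu(sum(w * h for w, h in zip(W2, hidden)) + B2)


print(predict_benzene(co=2.0, temperature=18.0, humidity=50.0))  # about 0.09
```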

Step 4: After the neural network's prediction, all data (humidity, temperature, CO, and benzene) are sent to the server via LoRa.
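LoRa links carry small payloads with limited airtime, so a compact binary encoding is usually preferred over text formats such as JSON. The sketch below assumes a hypothetical 8-byte layout in which each value is scaled by 100 and packed as a big-endian 16-bit integer; the project's actual payload format is not specified in the source.

```python
import struct

# Hypothetical layout: humidity (u16), temperature (s16), CO (u16), benzene (u16),
# each scaled by 100, big-endian. 8 bytes in total.
PAYLOAD_FORMAT = ">HhHH"
SCALE = 100


def encode_payload(humidity: float, temperature: float, co: float, benzene: float) -> bytes:
    """Pack the four readings; raises struct.error if a scaled value is out of range."""
    values = (humidity, temperature, co, benzene)
    return struct.pack(PAYLOAD_FORMAT, *(round(v * SCALE) for v in values))


def decode_payload(payload: bytes) -> dict:
    """Server-side inverse of encode_payload."""
    h, t, c, b = struct.unpack(PAYLOAD_FORMAT, payload)
    return {"humidity": h / SCALE, "temperature": t / SCALE,
            "co": c / SCALE, "benzene": b / SCALE}


frame = encode_payload(52.5, -3.25, 1.2, 4.75)
print(len(frame), decode_payload(frame))
```

The signed type for temperature allows readings below zero outdoors; with a scale of 100, the unsigned fields cover 0 to 655.35.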

Step 5: Once the data reach the server, the network server sends a write request to the InfluxDB database, which stores the data permanently, indexed by timestamp.
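A common way to write timestamped points to InfluxDB is its line protocol, sent as the body of an HTTP write request. The helper below builds one line-protocol record; the measurement name `air_quality`, the `device` tag, and the field names are assumptions for illustration, and the timestamp is in nanoseconds, InfluxDB's default precision.

```python
import time


def _escape_key(s: str) -> str:
    # Tag keys, tag values and field keys must escape commas, equals signs and spaces.
    return str(s).replace(",", r"\,").replace("=", r"\=").replace(" ", r"\ ")


def _escape_measurement(s: str) -> str:
    # Measurement names only need commas and spaces escaped.
    return str(s).replace(",", r"\,").replace(" ", r"\ ")


def to_line_protocol(measurement: str, tags: dict, fields: dict, timestamp_ns=None) -> str:
    """Build one InfluxDB line-protocol record: measurement,tags fields timestamp."""
    tag_str = "".join(f",{_escape_key(k)}={_escape_key(v)}" for k, v in sorted(tags.items()))
    field_str = ",".join(f"{_escape_key(k)}={float(v)}" for k, v in fields.items())
    ts = time.time_ns() if timestamp_ns is None else timestamp_ns
    return f"{_escape_measurement(measurement)}{tag_str} {field_str} {ts}"


line = to_line_protocol(
    "air_quality",                      # hypothetical measurement name
    {"device": "node01"},               # hypothetical tag identifying the sensor board
    {"humidity": 52.5, "temperature": 21.3, "co": 1.2, "benzene": 4.75},
    timestamp_ns=1700000000000000000,
)
print(line)
```

Keeping the device identifier as a tag (indexed) and the measurements as fields (not indexed) lets queries filter by board efficiently.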

Step 6: Through a Tableau web data connector, the data stored in InfluxDB are analyzed and managed in Tableau.”
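A Tableau Web Data Connector is written in JavaScript, but its core task, turning a query result into flat rows for a Tableau table, is language-independent. The sketch below assumes InfluxDB 1.x, whose HTTP `/query` endpoint returns JSON grouped into `results` and `series`, each series carrying `columns` and `values` (plus `tags` when the query uses `GROUP BY`); the function name `influx_to_rows` and the sample data are hypothetical.

```python
def influx_to_rows(response: dict) -> list:
    """Flatten an InfluxDB 1.x /query JSON response into one dict per row."""
    rows = []
    for result in response.get("results", []):
        for series in result.get("series", []):
            columns = series["columns"]
            tags = series.get("tags", {})       # present only for GROUP BY queries
            for values in series.get("values", []):
                row = dict(zip(columns, values))
                row.update(tags)                # repeat group tags on every row
                rows.append(row)
    return rows


sample = {"results": [{"statement_id": 0, "series": [{
    "name": "air_quality",
    "columns": ["time", "co", "benzene"],
    "values": [["2023-11-14T22:13:20Z", 1.2, 4.75],
               ["2023-11-14T22:14:20Z", 1.3, 4.9]],
}]}]}
print(influx_to_rows(sample))
```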